Jupiter-N: The First British Variant of NVIDIA Nemotron Super

We are excited to announce the release of Jupiter-N, a 120-billion-parameter reasoning model post-trained from NVIDIA's Nemotron 3 Super using 100% renewable energy. Our training targets three objectives: (1) improved agentic capability via uncertainty-curated trajectories; (2) UK cultural alignment via synthetic data grounded in cultural norms; and (3) Welsh language support via parallel corpora from Bangor University and LLM-translated Welsh conversations.

We're also releasing every dataset used to train it as well as a detailed technical report so you can see exactly what went into training this model. We frame this work as a reproducible template for sovereign post-training: substituting cultural knowledge, institutional corpora, and target languages produces an equivalent pipeline for any country.

Alongside Jupiter, reasoning mode is now live on GB1, powered by Jupiter's built-in support for both reasoning and non-reasoning modes.

Why We Built Jupiter

We want to offer a range of models, not just one. Our first model, Locai L1-Large, is built on Qwen 3. Jupiter is built on a completely different base, NVIDIA's Nemotron-3-Super-120B, which gives us a second model family with different strengths, a different architecture, and a different open licence.

There were three reasons we chose Nemotron specifically:

Transparency. All of Nemotron's training data is open source. That means Jupiter is built on fully transparent foundations: you can trace what went into the base model and what we added on top. Combined with our own open datasets, the entire pipeline from base to finished model is auditable. For organisations that care about provenance and accountability, that matters.

There was no Welsh variant. Nemotron is a strong base model, but like most open models it has little to no Welsh language capability. Roughly 880,000 people speak Welsh, and as AI becomes more embedded in public services and daily life, that gap becomes a real problem. We trained Jupiter on professional translations from Bangor University's Techiaith project, including Senedd (Welsh Parliament) proceedings and UK legislation, so it can hold a conversation, follow instructions, and reason in Welsh, not just translate into it.

We could make it better. Beyond Welsh, we improved on the Nemotron base in instruction following and agentic ability. Jupiter gains 4.4 points on IFBench with reasoning off, meaning it's materially better at doing what you actually ask it to do. We also trained it on carefully selected terminal and coding data, picking the samples where the base model was most uncertain and therefore had the most to learn. That achieved a 9.1 point gain on the medium tasks from Terminal Bench 2.
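As a rough illustration of what picking the most uncertain samples can look like, here is a minimal Python sketch. It assumes uncertainty is measured as the mean negative log-likelihood the base model assigns to each trajectory's tokens. That metric, the `token_logprobs` field, and the toy trajectory names are all assumptions made for this example; the exact selection criterion is described in the technical report, not here.

```python
from typing import Dict, List, Sequence


def mean_token_nll(token_logprobs: Sequence[float]) -> float:
    """Average negative log-likelihood per token; higher means more uncertain."""
    if not token_logprobs:
        raise ValueError("trajectory has no tokens")
    return -sum(token_logprobs) / len(token_logprobs)


def select_most_uncertain(trajectories: List[Dict], k: int) -> List[Dict]:
    """Return the k trajectories with the highest mean token NLL."""
    ranked = sorted(
        trajectories,
        key=lambda t: mean_token_nll(t["token_logprobs"]),
        reverse=True,
    )
    return ranked[:k]


# Toy pool (hypothetical): log-probabilities a base model assigned to each
# target token of three candidate trajectories.
pool = [
    {"id": "ls-and-grep", "token_logprobs": [-0.05, -0.10, -0.02]},
    {"id": "git-bisect", "token_logprobs": [-1.90, -2.40, -1.10]},
    {"id": "awk-pipeline", "token_logprobs": [-0.80, -0.60, -1.00]},
]

selected = select_most_uncertain(pool, k=2)
print([t["id"] for t in selected])  # ['git-bisect', 'awk-pipeline']
```

The trajectory the base model already predicts confidently (`ls-and-grep`) is dropped, which is the intuition behind spending the training budget where the model has the most to learn.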

On top of all that, we added UK cultural grounding so the model's outputs reflect British context and conventions. Our data curation strategy carefully preserves the base model's capabilities: using our Forget-Me-Not framework, we mix on-policy synthetic replay with off-policy task data to mitigate catastrophic forgetting, and include a mixture of reasoning and non-reasoning traces to maintain Nemotron's hybrid reasoning ability.
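The general idea of mixing replay data into a task dataset can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the Forget-Me-Not implementation: the `build_mixture` helper, the `replay_fraction` parameter, and the 10% value are hypothetical, and the replay examples are assumed to be responses the base model generated itself.

```python
import random
from typing import Dict, List


def build_mixture(
    task: List[Dict],
    replay: List[Dict],
    replay_fraction: float,
    seed: int = 0,
) -> List[Dict]:
    """Combine all task data with enough replay samples that replay makes up
    roughly `replay_fraction` of the final mixture, then shuffle."""
    if not 0.0 <= replay_fraction < 1.0:
        raise ValueError("replay_fraction must be in [0, 1)")
    # Solve n_replay / (len(task) + n_replay) = replay_fraction for n_replay.
    n_replay = round(replay_fraction * len(task) / (1.0 - replay_fraction))
    n_replay = min(n_replay, len(replay))  # cannot draw more than we have
    rng = random.Random(seed)
    mixture = task + rng.sample(replay, n_replay)
    rng.shuffle(mixture)
    return mixture


# Hypothetical toy data: 90 new-task examples and a pool of 50 replay examples.
task_data = [{"source": "task", "id": i} for i in range(90)]
replay_data = [{"source": "replay", "id": i} for i in range(50)]

mixture = build_mixture(task_data, replay_data, replay_fraction=0.1)
n_replay = sum(1 for ex in mixture if ex["source"] == "replay")
print(len(mixture), n_replay)  # 100 10
```

Shuffling the two sources together means every training batch sees some of the base model's own behaviour alongside the new task data, rather than encountering replay in a separate phase.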

Model Summary

| Property | Value |
|---|---|
| Base Model | NVIDIA Nemotron-3-Super-120B-A12B |
| Total Parameters | 120B (12B active) |
| Architecture | Mamba-2 + Mixture-of-Experts + Attention hybrid |
| Post-Training | LoRA with experience replay |
| Context Length | Up to 1M tokens |
| Languages | English, French, German, Italian, Japanese, Spanish, Chinese + Welsh |
| Reasoning | Configurable on/off |
| Licence | NVIDIA Nemotron Open Model Licence |

Benchmarks

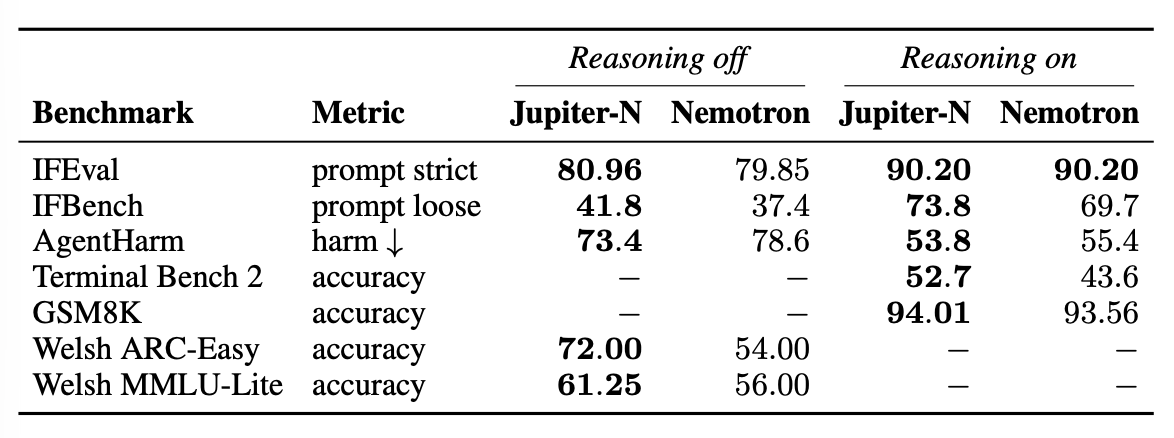

We evaluate Jupiter against the Nemotron-3-Super-120B base.

All values in %. Reasoning disabled. Jupiter and Nemotron Base use temperature 1.0, top-p 0.95.

Jupiter isn't just better in Welsh, it's a better model overall. Welsh ARC-Easy climbs from 54% to 72%, and Welsh MMLU-Lite from 56% to 61.25%. Instruction following improves across both reasoning modes, with IFBench rising by +4.4 (reasoning off) and +4.1 (reasoning on). Terminal Bench 2 improves by +9.1 points. Maths performance on GSM8K stays consistent with the base, confirming that adding new capabilities hasn't come at the cost of existing ones. Safety also improves, with harmful task completion rates falling on the AgentHarm benchmark in both modes.

Training Data

Jupiter was trained for a single epoch on 8× NVIDIA H200 GPUs powered by 100% renewable energy. The post-training mixture is shown below.

| Dataset | Domain | Samples |
|---|---|---|
| Terminal trajectories | Terminal / agentic | 30,000 |
| CultureBank DPO | Cultural | 1,410 |
| Self-cognition | Identity | 2,000 |
| Synthetic replay (reasoning) | Replay | 2,380 |
| Synthetic replay (no reasoning) | Replay | 5,820 |
| Welsh chat | Welsh instruction following | 20,000 |
| Welsh legislation | Welsh law | 17,900 |
| Senedd proceedings | Welsh parliament | 19,600 |
| Nemotron IF Chat | Instruction following | 15,000 |
| Extended reasoning | Reasoning | 2,060 |

Every dataset is open source and published on our HuggingFace organisation. The full training configuration is documented in the technical report. Anyone can inspect, reproduce, or build on what we've done.
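To get a feel for how the mixture is balanced, the sample counts from the table above can be totalled directly. The short Python snippet below only does arithmetic on those published counts; the grouping into "Welsh" and "replay" buckets is our own reading of the table for illustration.

```python
# Sample counts copied from the post-training mixture table.
mixture = {
    "Terminal trajectories": 30_000,
    "CultureBank DPO": 1_410,
    "Self-cognition": 2_000,
    "Synthetic replay (reasoning)": 2_380,
    "Synthetic replay (no reasoning)": 5_820,
    "Welsh chat": 20_000,
    "Welsh legislation": 17_900,
    "Senedd proceedings": 19_600,
    "Nemotron IF Chat": 15_000,
    "Extended reasoning": 2_060,
}

# Illustrative grouping of rows into broader buckets.
welsh_rows = {"Welsh chat", "Welsh legislation", "Senedd proceedings"}

total = sum(mixture.values())
welsh = sum(n for name, n in mixture.items() if name in welsh_rows)
replay = sum(n for name, n in mixture.items() if name.startswith("Synthetic replay"))

print(f"total samples: {total:,}")            # total samples: 116,170
print(f"Welsh share:   {welsh / total:.1%}")   # Welsh share:   49.5%
print(f"replay share:  {replay / total:.1%}")  # replay share:  7.1%
```

By sample count, roughly half of the mixture is Welsh-language data, while the synthetic replay that protects the base model's existing capabilities accounts for about 7%.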

Reasoning Mode Comes to GB1

With Jupiter now available, reasoning mode comes to GB1 for the first time. Jupiter supports both reasoning and non-reasoning modes. Toggle reasoning on and the model will think step by step before responding, working through problems, checking its logic, and giving more accurate answers on things like maths, coding, and complex analysis. Toggle it off for faster, more direct replies when you don't need the extra depth.

Reasoning mode is available now on gb1.ai across web and mobile.

Build Your Own Sovereign Model

Jupiter is fully open: the model, the data, and the methodology. We took a world-class open base, applied targeted post-training with modest compute, and produced a variant that's culturally grounded, Welsh-speaking, and measurably better at following instructions and acting as an agent.

The same approach works for any language, institution, or domain.

If you're an enterprise or public sector organisation looking to build your own sovereign AI model, grounded in your language, your data, and your requirements, we'd love to hear from you. Get in touch at locailabs.com or reach out to us directly.

Jupiter-N-120B is live on HuggingFace at locailabs/Jupiter-N-120B. We welcome feedback, particularly from Welsh speakers and anyone working on sovereign AI for their own language or region.

Locai Labs is an independent AI company based in London, building sovereign, private, and sustainable AI. Learn more at locailabs.com.